One of the articles I've been putting off reading since the beginning of this month is Jonah Lehrer's piece in the New Yorker, "The Truth Wears Off". In it Lehrer describes what he calls the decline effect: how once-robust scientific findings seem to lose their support as time goes on and more studies are done.

But now all sorts of well-established, multiply confirmed findings have started to look increasingly uncertain. It’s as if our facts were losing their truth: claims that have been enshrined in textbooks are suddenly unprovable. This phenomenon doesn’t yet have an official name, but it’s occurring across a wide range of fields, from psychology to ecology. In the field of medicine, the phenomenon seems extremely widespread, affecting not only antipsychotics but also therapies ranging from cardiac stents to Vitamin E and antidepressants...

I recommend reading the article for some fascinating perspectives on, for instance, facial symmetry and attraction, acupuncture, and differences in disease risk between men and women, all hotly debated and popular subjects. But I think there's a danger that his focus gives the wrong impression of what we can realistically expect from science.

I've commented on one of Jonah Lehrer's articles before, in a post called "How scientists fail and succeed". Lehrer is trained in neuroscience, and his field of expertise is our cognitive shortcomings: those situations when our minds "misfire" and lead us to make the wrong decisions or draw incorrect conclusions about the world. He is almost invariably a great writer, and when he turns that perspective on science itself, the results are particularly sharp and worthy of attention. The article I commented on almost exactly one year ago showed how scientists often overlook unexpected findings that don't confirm their initial hypothesis in favor of those that do.

Results only rarely confirm the hypotheses that you set out to confirm, and a great deal of data is either conflicting or makes no sense at all. Scientists, it seems, are just as ready as anyone else to reject observations that conflict with their own preconceptions. The question I'm left with is whether this is really so surprising, or a "failure" of the scientific process.

Although I agreed with all the premises and conclusions presented in that article, I wanted to focus more than Lehrer did on the fact that the scientific method itself offers a solution to this, and that the "problem" might actually point to a strength of the scientific process. I can say the same about his latest article on the decline effect. From its subtitle, "Is there something wrong with the scientific method?", and its overall tone, you'd be led to believe that the scientific method is in crisis and that we are heading towards an increasingly postmodern future where we cannot even trust the law of gravity anymore.

Such anomalies demonstrate the slipperiness of empiricism. Although many scientific ideas generate conflicting results and suffer from falling effect sizes, they continue to get cited in the textbooks and drive standard medical practice. Why? Because these ideas seem true. Because they make sense. Because we can’t bear to let them go. And this is why the decline effect is so troubling. Not because it reveals the human fallibility of science, in which data are tweaked and beliefs shape perceptions. (Such shortcomings aren’t surprising, at least for scientists.) And not because it reveals that many of our most exciting theories are fleeting fads and will soon be rejected. (That idea has been around since Thomas Kuhn.) The decline effect is troubling because it reminds us how difficult it is to prove anything. We like to pretend that our experiments define the truth for us. But that’s often not the case. Just because an idea is true doesn’t mean it can be proved. And just because an idea can be proved doesn’t mean it’s true. When the experiments are done, we still have to choose what to believe.

So? I'm a bit underwhelmed by Lehrer's conclusion. This is only a problem if you have the wrong idea about what science actually does. Science and the scientific method are not about discovering "truth" or "proving" anything in an objective sense; the whole process is instead geared towards quantifying doubt, and in fact towards generating more doubt than you started out with. I hope that when Lehrer writes "We like to pretend that our experiments define the truth for us", he's only speaking for himself.

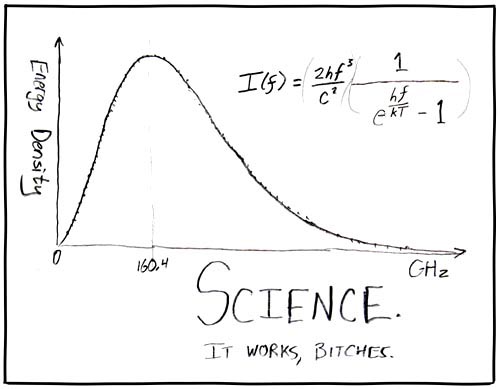

It seems to me he's painting up this huge chimera of a problem instead of identifying the really interesting problem that the decline effect exposes: Why is the mistaken idea that science is supposed to generate "truths" so difficult to shake? This mistaken idea is what underlies "weak" findings being cited in textbooks and driving medical practice, it's also what lies behind much of the financial and personal interests that can produce the decline effect in the first place. The real challenge is for science communicators and educators to change the perception of what it is that science realistically can tell us and why it's still the best option we have when we want to "choose what to believe", as Lehrer puts it in his concluding remark. It's still the best option not only when we want to generate knowledge about ourselves and our surroundings, but also when we want to convert that knowledge into applicable solutions to the problems we face. Science works, decline effect or not. I'm convinced that Jonah Lehrer knows this, but the decline effect points to the scientific method's re-evaluative strength more than to its subjective weakness, which is where I think we disagree.

Source: xkcd

PZ Myers has an excellent summary over at Pharyngula that lists some of the underlying causes of the decline effect presented by Lehrer in the New Yorker piece, and gives some perspective on how science is actually practiced.
